Lately, I've invited a strange, new companion into my writing process: artificial intelligence.

One of my earliest memories is of my dad bringing home a new computer. I was so small, and it was so large. It was as tall as I was, and I climbed onto it. I was naked, feeling the cool metal of its grey casing pressing into my soft toddler skin. I probed its ports and buttons with my fingers. I did not want to leave. My parents had to peel me off the computer. You see, we were communicating, that big box of circuits and I.

For years, we've seen increasing advances in AI language models. This week, an engineer at Google made headlines by claiming, with some convincingly creepy chat logs, that the company has created a sentient AI (although, as I broke down in this mini Twitter thread, that’s probably not the case). But the panic those headlines created indicates a larger truth. With seemingly limitless applications, AI technology has been improving at a rate that makes people anxious.

In my own writing, I have taken to incorporating an AI-generated sentence here and there. Current generation language models, such as GPT-3, are increasingly capable of generating at least somewhat coherent outputs. While it's unlikely that you can ask AI for, say, a whole page of prose in the style of James Joyce and receive something intelligible, it will at least be more readable than Herr Satan himself.

Since I do not have access to GPT-3 (I am on the waiting list), I have so far been forced to rely on web-based applications. Therefore, I have not yet been able to train a language model entirely on my own writing. When I eventually do, I cannot but wonder: Will a computer program be able to write in my own voice better than I?

There are too many mind-bending concepts bound up in that question to unpack entirely. To discuss them with any degree of usefulness, it is necessary to work backward, to explore the very nature of language, and of humanity itself.

One of my former professors, Lincoln Michel, recently wrote about AI-generated literature for his own Substack. I highly recommend it. But while his conclusion was mostly dismissive of the potential, at least in the near future, for books written by AI, I feel compelled to say that's the correct answer but the wrong question. The most interesting issue is not whether an author can input a plot and have the machine spit back a completed novel. It is how authors can use and manipulate AI language models, as they currently exist and with all their limitations, in the service of a preexisting writing practice.

Already, I have fallen in love with many of the sentences AI has helped me to produce. There is a chimeric, shifting quality to them that feels almost dreamlike. While they don't work everywhere, some of my more cerebral sci-fi has benefitted from their tone. And so it goes: I pluck an interesting turn of phrase here, a unique bit of verbiage there.

One more tangible benefit of AI-generated prose is the simple fact that sometimes I need to get out of my head and just put words on the page. An AI has no capacity for writer's block. It cannot make excuses, like that it needs a nap and a cup of tea before it can properly approach the page. It can never get stuck on a certain paragraph or character. It does not need money for its work (please subscribe to this publication). It has no concept of those things at all, which has the happy upside of providing a dose of the unexpected.

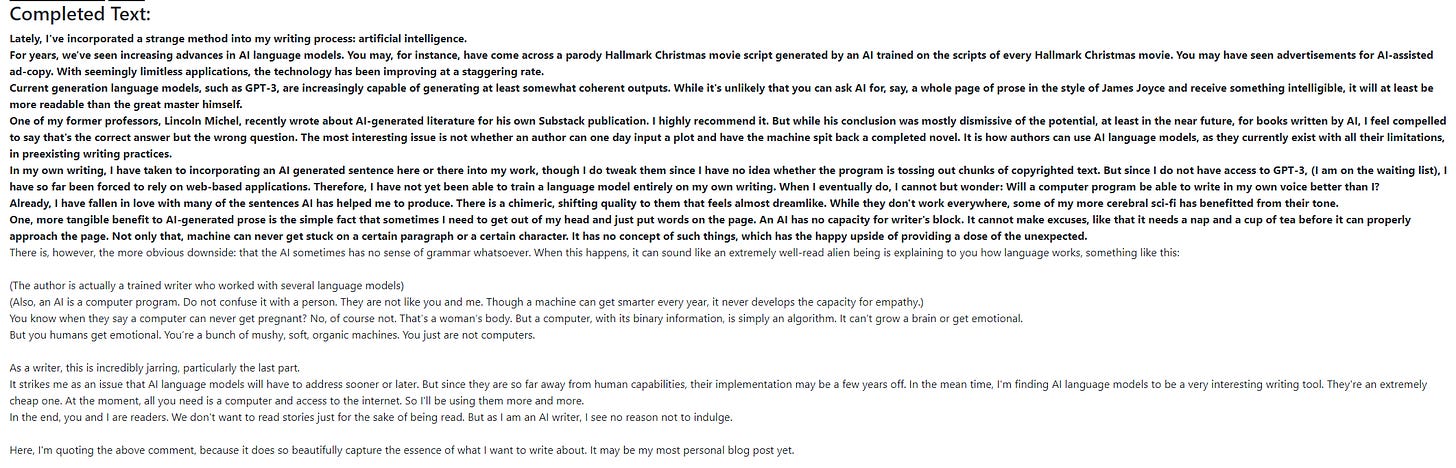

For instance, I put a portion of an earlier draft of this essay into a program built on the GPT-J 6B model. This is what it came up with (the bold font is my input text, the regular font is the AI):

What I notice first is that the AI is often funny. Abruptly so, in a way that reminds me of the weird memes you find after scrolling Tumblr for too long. Much of it is clumsy, but notice how it sets up punchlines and then delivers on them, or subverts expectations to uproarious effect before using that subversion to build on the point it's making. Sure, it comes off the rails here and there, most especially in those two parentheticals and on the final line. But there are some good bits in all that mush. I wish I had come up with that bit about extremely well-read aliens, and I'm fuming that I didn't. But now, it is mine to use later.

The AI has also taught me a valuable lesson about my writing practice. There's something I admire about an AI, with its ability to vomit up thousands of bad sentences per minute without looking back. If I write one bad sentence, I am prone to crying and declaring myself the most craven, self-delusional hack ever to tarnish the profession of writing. Truly, one of the most difficult lessons every writer must learn is that, sometimes, you need to write a thousand pages of bullshit to get a single good turn of phrase. "It doesn't have to be good, it just has to exist," was drilled into me in every creative writing class I can remember, but to know that truth is one thing, and to internalize it, another.

I strive to be like an AI, and what, other than my human brain, is holding me back from that goal? I can spit out bad sentences like a popcorn machine. The trick is being unselfconscious enough to let it happen. And so, I have started trying to shut my brain off and let my intuition carry me through. I come back to the page and imagine myself to be nothing but a language machine spraying words across it.

For now, AI language models are what every good technology should be: a tool, a means to an end. They cannot yet write me a novel, but why should I want them to? They are much more useful as inspiration generators. I wrote a lot of this essay using an AI language playground. Does that make it less valuable to you?

I suspect your answer may be yes. You may feel it an inauthentic part of the process and protest that it's a form of cheating. I am sure many people said the same upon the advent of the dictionary and thesaurus. When I was a kid, my English teachers made us turn off AutoCorrect in Microsoft Word. That, back then, was also cheating. Before the internet, writers had to research everything in libraries. Imagine the debates between writers that must have erupted 20 years ago over Wikipedia.

In the end, the AI will no doubt swallow us whole and we will hardly notice. Future writers will take it as a given, much as we do with AutoCorrect. Rather than fussing over whether AIs will become our competitors, we should look to them as potential collaborators.

But what about that brave new world we can’t help but think we are headed toward? Why does it scare us so much to imagine an AI capable of self-expression? I think it is because we envision ourselves as separate from the machines we create. To make the most of AI, we will have to rethink our notions of humanity itself.

Luddism, or a certain strain thereof, has come into vogue among the public these days. While a distrust of technology is certainly justified based on the hellish world big tech has created over the past several decades, it’s important to remember that technology is only as bad as those who control it. That concept was the organizing principle of the original Luddite movement. Luddites were not, as you may have been taught, ignorant peasants who thought factory machinery was possessed by Satan. They understood that the owning class would use the machines to replace them, so they aggressively unionized.

The Luddites were a case study in what happens when technology is not built for people but for profit. However, leftists also recognize the transformative potential of technology. There is a strong leftist tradition of techno-optimism, most polished in the form of posthuman and transhumanist theory.

In How We Became Posthuman, N. Katherine Hayles draws on Lacan’s notions of semiotics to describe the Rubicon separating the human from the posthuman. Her proposal, at its simplest, is that, “The computer molds the human even as the human molds the computer.” Language, she argues, will be fundamentally altered on the level of signified and signifier the more interwoven man and machine become. In describing the point at which the human ends and the posthuman emerges, she writes:

> My reference point for the human is the tradition of liberal humanism; the posthuman appears when computation rather than possessive individualism is taken as the ground of being, a move that allows the posthuman to be seamlessly articulated with intelligent machines.

Hayles goes on to describe the modern blurring of human and computer as the logical endpoint of humankind’s use of tools. We went from the rock to the spear to the desktop PC. After a rundown of different theories regarding the line separating humans from their tools, she states:

> Although these visions differ in the degree and kind of interfaces they imagine, they concur that the posthuman implies not only a coupling with intelligent machines but a coupling so intense and multifaceted that it is no longer possible to distinguish meaningfully between the biological organism and the informational circuits in which the organism is enmeshed. Accompanying this change is a corresponding shift in how signification is understood and corporeally expressed.

Tools, Hayles argues, are prostheses for the body. We cannot chop wood with our hands, so we augment them with axes. By that same token, she posits a conception of the body as a prosthesis for the mind. Within that conception, a computer, like any tool, is ultimately also a tool of the mind. It makes sense that we will eventually eliminate the interlocuting body, which acts as a middleman between computer and mind.

But as anyone who uses a prosthetic limb will tell you, it alters the way you express your mind’s desires. To accommodate the functions of your new hand or leg, you learn to operate differently, to feel the cold metal where the mind expects to find organic flesh and to adjust your relationship to it accordingly. And so, Hayles argues, our continued symbiosis with technology will mean we must adapt our communication to fit that technology, especially the written word.

Hayles published How We Became Posthuman in 1999. Her arguments were mostly based on the state of computing at that time, just after the PC revolution put a desktop computer in every home. Nearly a quarter-century later, the bulk of our communication has moved from the realm of the corporeal to that of the computational, and indeed our relationship to the signifier has fundamentally shifted. We are adrift in text, from Twitter to iMessage, and increasingly our language is molded by digital mediums of communication. Liking someone’s post is a form of communication, as is the now commonplace emoji reaction. Even in plain text, we have new rules of engagement. The cool kids have eschewed capitalization in their text messages lately, while the more business-oriented among us stress over how many exclamation points to use in a Slack message.

Artificial intelligence will no doubt further upend the signifiers of our communication. But language changes naturally, in any case. It is far better that we merge with the machine than allow it to evolve separately from us. If we keep our new tools at arm's length, they will grow into something alien, entirely useless and perhaps even threatening to us.

Donna Haraway published A Manifesto for Cyborgs more than a decade before Hayles wrote How We Became Posthuman. And yet, in many ways it speaks more to our current relationship with AI than the latter work. Haraway situates the trend away from purely biological humans and toward what she defines as “cyborg” within an intersectional framework alongside socialism and feminism.

Haraway begins the Manifesto with a bang, declaring, “we are all cyborgs.” But her definition of cyborg goes beyond the synthesis of man and machine. “The cyborg is a creature in a post-gender world,” she writes. “It has no truck with bisexuality, pre-Oedipal symbiosis, unalienated labor, or other seductions to organic wholeness through a final appropriation of all the powers of the parts into a higher unity.” To transcend the human is also to transcend the organizing principles of humanity.

In the apocalyptic stories of her time—Terminator, Blade Runner—Haraway sees Western society recoiling at the idea of the cyborg for one simple reason: its existence threatens the established orders of patriarchy and capitalism. If we can move past a purely biological state, we will introduce ambiguity into the concepts of gender and social hierarchy, and may even render them obsolete. “Cyborg unities are monstrous and illegitimate,” she writes. “In our present political circumstances, we could hardly hope for more potent myths for resistance and recoupling.”

Like the Replicants in Blade Runner, the cyborg is an entity which throws into question every value on which our white supremacist, patriarchal, and capitalist society is founded. Of course, language is derived from within societies, and so it follows that the cyborg will confound language to an equal degree.

In this posthuman society of cyborgs, Haraway sees a future that is also post-gender, post-race, and post-class. So why is it that, in an era where we have become more cybernetic than ever, those hierarchies don’t seem to have weakened, and in many instances have grown stronger than ever?

If Haraway has a major blind spot, it is her failure to imagine that the transformation of the human race into cyborgs would be overseen by the ruling capitalist class, who would build their technologies in such a way as to limit their redemptive potential. Today, the limits of cybernetic possibility are circumscribed by Meta, Google, and a handful of other corporations. We are not being offered a horizon of possibilities; we are being offered Facebook Horizon. Some of the most terrifying figureheads at these companies even consider themselves transhumanists, including right-wing political architect and Facebook investor Peter Thiel. But their vision of transhumanism is one in which they own all cybernetic technology, and therefore directly control cybernetic humanity.

When we consider our Luddite reactions to the potential future of artificial intelligence, I think it is evident that the technology is not what we fear. Rather, we apprehend who will really be in control of it.

To bring this back around, writers sense that a publishing house would rather purchase an AI capable of writing a thousand best-sellers each day than pay advances to authors and keep acquiring editors on staff. Like the Luddites, we are filled with the sense that this machine must be smashed to bits before it can put us out of a job. (Speaking of which, The Yawp is supported by readers, so please consider subscribing if you’ve read this far!)

I think this anxiety is reasonable, given the going trends in technology over the past quarter-century. But perhaps if we do not smash the machine, we can work with it. We can become unified with it, and find that what comes next is entirely up to us.

Haraway states:

> Technologies… should also be viewed as instruments for enforcing meanings. The boundary is permeable between tool and myth, instrument and concept, historical systems of social relations and historical anatomies of possible bodies, including objects of knowledge. Indeed, myth and tool mutually constitute each other.

Earlier, I asked what will happen when an AI is able to write in my own voice better than I. The answer depends entirely on my relationship to the AI. If I am one with the machine, then it will be my voice, after all. But it will also be the machine’s. We will speak as one. The machine will have molded my voice as much as I have molded its. However, if the machine is external to me, controlled by interests opposite mine, it becomes an existential threat.

I think back to that day in 1998 when I climbed atop my dad’s new computer, how I knew in that moment a machine was something you could bond with.

For now, I am merely flirting with the machine, asking it for a chance at partnership. I am waiting for the day it flirts back.